Executive summary

Apr 10, 2026


Artificial intelligence (“AI”) is widely hailed as a defining marker of innovation, and marketing is never far behind. As companies seek to ride the tailwinds of this revolution, a new marketing trend commonly referred to as “AI washing” has emerged: promoting products and services as “AI-powered,” often with little substance behind the label. Misleading claims are nothing new; whenever a concept becomes commercially valuable, it tends to be overstated in competitive messaging. It is an old trick with a modern veneer.

In late January 2026, Competition Bureau Canada published a report addressing algorithmic pricing (the use of automated software and AI to set or adjust product prices in real time) and its implications for competition law. This publication forms part of the Bureau’s broader reflection on AI and market dynamics, a subject that was previously explored in the Bureau’s 2024 paper issued prior to its public consultation on AI and competition. In parallel, according to the Canadian Marketing Association, AI washing is more than a benign marketing strategy; it can reduce trust in emerging technologies and increase the risk of legal and regulatory penalties as well as reputational risk.

AI washing occurs when claims exaggerate or misrepresent the involvement of AI in a product or service. Examples include presenting basic automation or rule-based software as AI, overstating the level of machine learning, autonomy, performance or security, or using vague language that implies guaranteed or superior outcomes without a factual basis.

A striking example of the risks posed by AI washing comes from Builder.ai, a London‑based startup once valued at over US$1 billion that promoted its platform as allowing users to build applications using advanced AI, making the process “as simple as ordering a pizza.” Subsequent reporting and accounts from former engineers revealed a sharply different reality: although the company marketed its service as AI‑driven, most of the work was carried out by human coders (sometimes hundreds of outsourced engineers in India) rather than by fully autonomous AI systems.

This gap between promotional messaging and the underlying technical reality did more than confuse customers; it is alleged to have influenced investor perceptions and contributed to financial and operational instability. As the discrepancies came to light, revenue projections were sharply revised downward, creditors seized assets, and the company ultimately entered insolvency proceedings in 2025, underscoring how exaggerated or unsubstantiated AI claims can distort markets and damage credibility.

This is not an isolated case, but part of a broader pattern that has emerged in recent years and continues to grow. Companies around the world have faced regulatory action for overstating or misrepresenting their use of AI.

Canadian and American companies are not immune to this problem either. Delphia Inc., a Toronto-based fintech startup, allegedly made false statements in U.S. SEC filings and press materials claiming it used advanced AI and machine learning powered by client data to predict market trends and generate investment advantages, when in reality it did not possess those AI capabilities. Global Predictions Inc., a San Francisco-based investment advisory company, allegedly made misleading statements about its use of AI, stating that it was the “first regulated AI financial advisor” and that it provided “expert AI-driven forecasts.” Both were charged by the SEC and faced civil penalties totalling US$400,000. Another example is Workado, LLC, a software company that marketed an AI-powered tool it claimed could accurately determine whether written text was generated by AI; however, the U.S. Federal Trade Commission (FTC) found no reliable evidence to support those statements and ordered the company to stop making such unsupported AI performance claims.

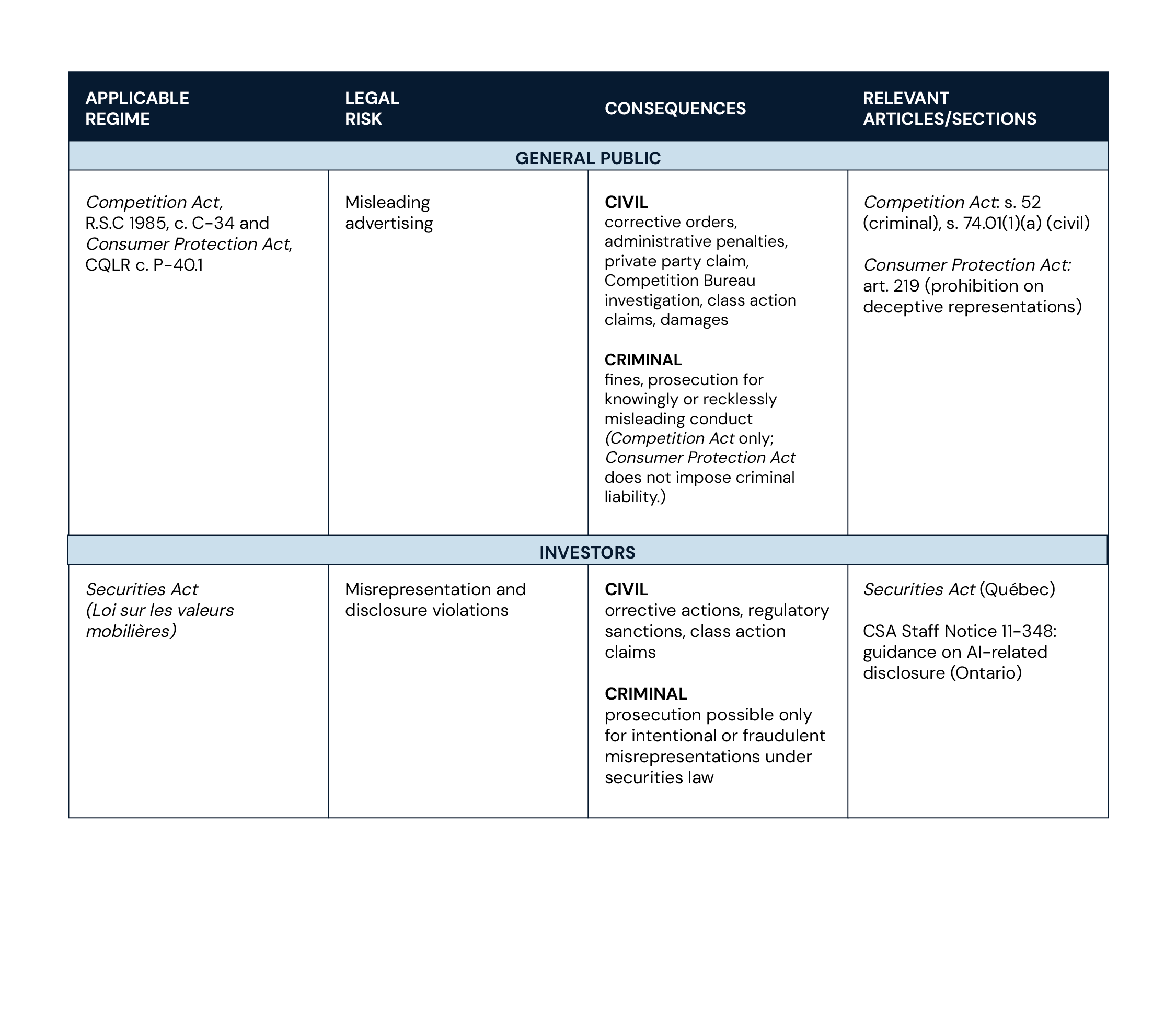

In Canada, companies’ obligations regarding disclosures or claims related to the use of AI and the risks arising from AI washing vary depending on the audience: statements directed at the general public may trigger a different set of legal requirements than those addressed to investors.

Marketing claims aimed at consumers or the general public may fall under the Canadian Competition Act, R.S.C. 1985, c. C-34 (“CA”) and the Québec Consumer Protection Act (“CPA”), which prohibit businesses from making false or misleading statements in a material respect in the promotion of a product, service or business interest.

Violations of the CA may result in both criminal and civil consequences. Under section 52 CA, it is a criminal offence to knowingly or recklessly make a materially false or misleading representation to the public for the purpose of promoting a product or business interest, which may lead to prosecution and the imposition of fines or other penalties upon conviction. In addition, section 36 CA opens the door to damages claims for loss or harm caused by conduct that contravenes the criminal provisions of the Act (including section 52 CA).

On the civil side, section 74.01(1)(a) CA addresses materially false or misleading representations as reviewable conduct, which may lead to corrective measures, administrative monetary penalties and other remedial relief. In certain circumstances, private parties (including businesses [e.g., competitors, suppliers, customers], trade associations or individuals) may also seek leave from the Competition Tribunal to bring their own application, where the Tribunal is satisfied that doing so is in the public interest, pursuant to section 103.1(6.1) CA.

These rules apply to any form of marketing communication likely to shape consumer behaviour, including advertisements, online promotions and comparable formats. Suspected breaches may be investigated by the Competition Bureau and can result in proceedings under the CA.

In Québec, the CPA, notably section 219 CPA, prohibits deceptive representations concerning goods or services and can also serve as a basis for a claim, including as a ground for a class action.

In the context of AI washing, embellishing a company’s AI features can carry serious legal and commercial consequences, potentially resulting in investigations, corrective orders and monetary penalties.

Statements about AI aimed at investors, shareholders or financial markets are governed by Canadian securities laws as well as reporting rules for public companies established by our stock exchanges, which prohibit false or misleading disclosure, or “misrepresentations,” and require that investors receive accurate information about a company’s business, risks and use of technology.

Under provincial securities acts across Canada, including Québec’s, a “misrepresentation” generally means an untrue statement of a material fact or the omission of a fact necessary to prevent a statement from being misleading. References to AI often signal innovation, efficiency or enhanced value and can significantly influence investor perceptions and decision making, so AI-related statements are highly likely to be considered material. Regulators have emphasized that AI-related claims must be treated with the same rigour as other material information: the Canadian Securities Administrators (CSA) expect issuers to provide balanced, substantiated explanations of AI use, including associated risks and operational impacts, in continuous disclosure documents.

Misrepresentations or omissions of material facts in investor communications can trigger civil liability, regulatory sanctions, corrective actions or class-action claims, while intentional or fraudulent statements may lead to criminal prosecution. Beyond legal consequences, overstating AI capabilities can damage reputations, erode investor confidence and create financial and market setbacks. As emphasized by the Autorité des marchés financiers, the use of AI in the financial sector requires careful oversight and sound risk management to address the legal and operational risks it may create.

AI washing is more than a marketing misstep; it carries real legal, financial and reputational consequences. Companies that exaggerate AI capabilities risk investigation under competition, consumer protection and securities law, potential civil and criminal liability, and lasting damage to investor and consumer trust.

The path to responsible AI communication lies in substantiated claims, transparent disclosures and robust oversight, including documenting human involvement.

While the growing appeal of AI marketing lies in its ability to attract attention and generate buzz, companies must nonetheless resist the temptation to overstate their use of AI. BCF’s Competition and AI Practice groups can help your organization navigate this fast-moving landscape responsibly, including by helping you find the right balance between substance and trend, advising on sensible documentation and oversight in the use of AI, and providing support on marketing best practices.
